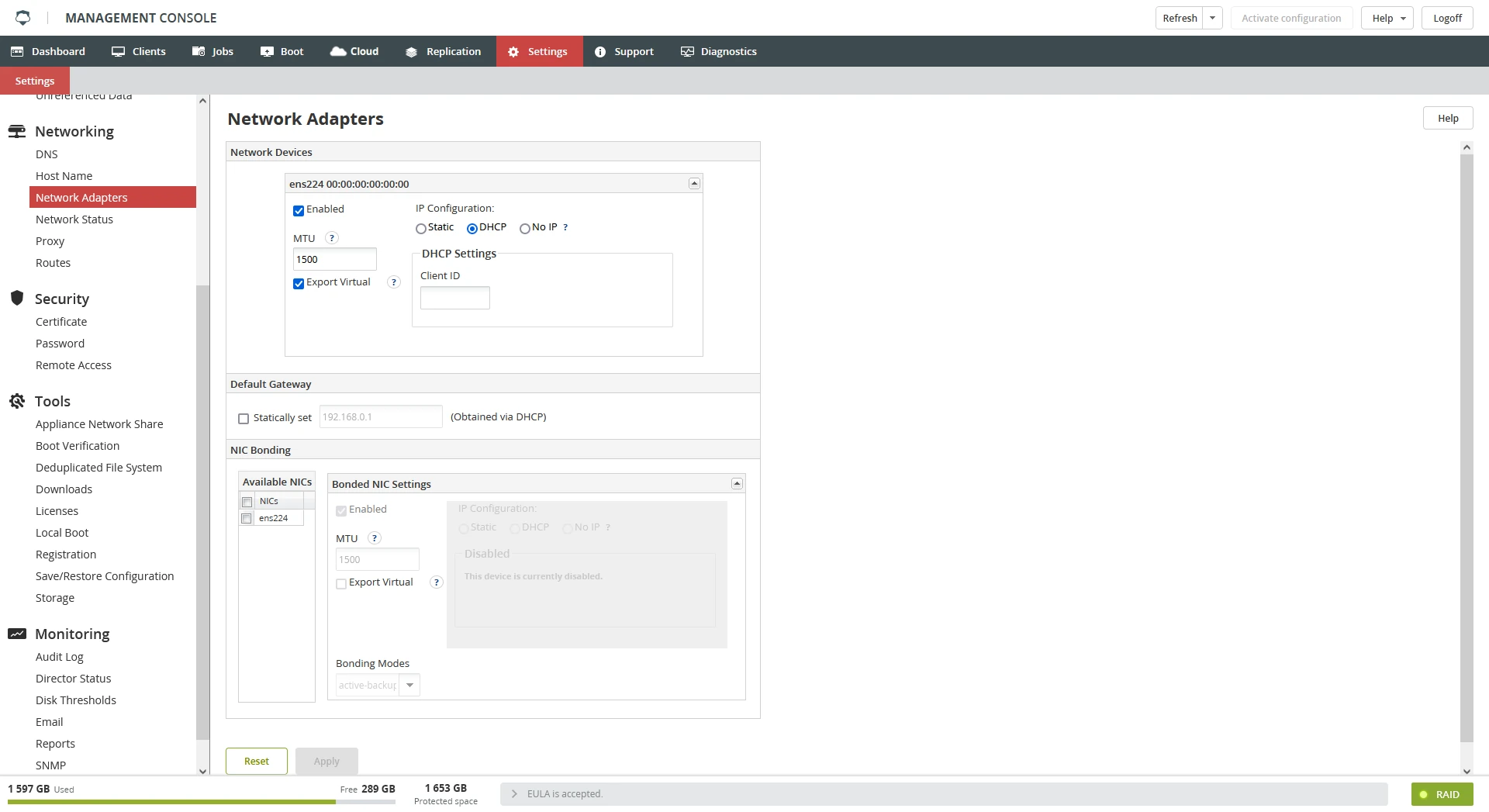

Network Adapters

Overview

Use the Network Adapters section to configure the network interfaces of the Backup & Disaster Recovery appliance.

For each interface, you can specify the following:

Whether the interface is enabled (active on startup)

Whether the device uses an IP address from a DHCP server or a static IP address

Export Virtual: if selected, allows a VM from the Boot tab to use this NIC

To use a static IP address, enter the IP Address and Netmask values in the Static Settings panel.

Client ID in the DHCP Settings group is optional. The Client ID is sent to DHCP servers when the appliance starts, and it can help the server(s) configure the appliance's network settings. Configuring multiple network devices on the same subnet is not supported.

Faster networking of the appliance

Starting with IDR 5.0, two faster networking methods are supported: 10 Gbit Ethernet connections and network connection bonding. Each has its pros and cons. This section summarizes them to help you choose the method that is best for your environment.

| Method | Pros | Cons |
|---|---|---|
| 10 Gbit | Easier to set up than bonding. Faster than bonding. Can provide faster throughput for individual clients and for multiple simultaneous backups. | Requires a 10 Gbit NIC, a 10 Gbit port on the switch, and adequate wiring. |
| NIC bonding | Software-only solution that lets the existing NICs work together. Even if more NICs need to be added, it is usually cheaper than adding a 10 Gbit NIC. Enhanced fault tolerance (one bad network cable does not completely disconnect the machine from the network). | Not as fast as a 10 Gbit connection. Harder to set up. Most modes require support from the switch. Does not make any one client’s connection much faster, but does allow multiple simultaneous clients to be faster (for details, see the NIC Bonding setup section). Clients beyond an IP gateway might see no speed increase. |

Network monitoring with the ntop command

The first time ntop is used, start it by running the ntop command at the command line. You will be required to set a password. Once the password is set, stop the session with Ctrl+C. This step is only required the first time ntop is started.

After the password is set, start the ntop service with the command service ntop start.

The service is not started by default. To start it at boot, edit /etc/rc.local.

ntop can now be viewed at http://{cfa-ip}:3000, where {cfa-ip} is the IP address of the appliance.

Due to limitations of ntop, you may need to open the URL in a private browsing window. Alternatively, clearing the browser cache can also resolve some issues.
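If the page does not load, it can help to first confirm that the appliance accepts connections on port 3000 at all. The sketch below assumes you run it from a machine that can reach the appliance; the script name and command-line usage are hypothetical, and a successful connection only shows that something is listening on that port, not that ntop itself is healthy.

```python
import socket
import sys

NTOP_PORT = 3000  # port given in this article for the ntop web interface


def ntop_url(appliance_ip: str) -> str:
    """Return the ntop URL for an appliance, i.e. http://{cfa-ip}:3000."""
    return f"http://{appliance_ip}:{NTOP_PORT}"


def ntop_port_open(appliance_ip: str, timeout: float = 3.0) -> bool:
    """Return True if something accepts TCP connections on the ntop port.

    This does not prove that the listener is ntop or that sign-in will work.
    """
    try:
        with socket.create_connection((appliance_ip, NTOP_PORT), timeout=timeout):
            return True
    except OSError:  # connection refused, host unreachable, or timed out
        return False


if __name__ == "__main__" and len(sys.argv) > 1:
    ip = sys.argv[1]  # appliance IP address passed on the command line
    state = "open" if ntop_port_open(ip) else "not reachable"
    print(f"{ntop_url(ip)}: port {state}")
```

For example, `python check_ntop.py 192.0.2.10` (a hypothetical script name and documentation-range address) prints the URL to open and whether the port answered.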

10-Gbit networking setup

Setting up a 10 Gbit connection requires no software settings different from those used for a 1 Gbit connection.

To confirm that the network is connected at 10 Gbit/s, on the Settings tab, click Network Status in the Networking group. Look for a network connection with the speed shown as 10000 (10000 Mbit/s = 10 Gbit/s).
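The Network Status page is the documented way to check the link speed. If you also have shell access to the appliance's underlying Linux system (an assumption; this article does not describe such access), the same Mbit/s value is exposed by the standard Linux sysfs attribute `/sys/class/net/<interface>/speed`. A minimal sketch that reads it and checks for 10 Gbit:

```python
from pathlib import Path
from typing import Optional


def read_speed_mbps(iface: str) -> Optional[int]:
    """Read the negotiated link speed in Mbit/s from Linux sysfs.

    Returns None if the interface does not exist or the speed is unknown
    (reading fails or reports -1 when the link is down, for example).
    """
    path = Path("/sys/class/net") / iface / "speed"
    try:
        value = int(path.read_text().strip())
    except (OSError, ValueError):
        return None
    return value if value > 0 else None


def mbps_to_gbps(speed_mbps: int) -> float:
    """Convert megabits per second to gigabits per second (10000 -> 10.0)."""
    return speed_mbps / 1000


def is_10gbit(speed_mbps: Optional[int]) -> bool:
    """True if the link negotiated at 10 Gbit/s or faster."""
    return speed_mbps is not None and speed_mbps >= 10000


if __name__ == "__main__":
    net = Path("/sys/class/net")
    if net.is_dir():
        for iface in sorted(p.name for p in net.iterdir()):
            speed = read_speed_mbps(iface)
            if speed is None:
                print(f"{iface}: speed unknown")
            else:
                print(f"{iface}: {speed} Mbit/s ({mbps_to_gbps(speed):g} Gbit/s), "
                      f"10 Gbit: {is_10gbit(speed)}")
```

Interface names such as `eth0` vary by system; the loop simply lists whatever interfaces sysfs reports.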

NIC bonding introduction

On appliances with firmware versions 8.7.0–8.7.5, NIC bonding settings either cannot be changed in the Management Console (if they were configured before the update) or are not shown at all (if they were not configured before the update).

To change these settings, update the appliance firmware to version 8.8.0 or later.

NIC bonding (also known as port trunking) allows multiple network interface cards to share one IP address and work simultaneously on one network. Bonding provides fault tolerance and, depending on the mode used, might also provide some load balancing to improve throughput.

The appliance supports several bonding modes. We generally recommend balance-alb or 802.3ad, but your specific needs might be better served by one of the other modes. All of these modes provide some fault tolerance, and some also provide a level of load balancing. The first consideration when choosing a mode is which modes (if any) are supported by the switch the appliance will be connected to.

| Mode | Pros | Cons |
|---|---|---|
| active-backup | Fault tolerance. No switch support required. | No improvement to backup and restore speed. |
| balance-tlb | Fault tolerance. NICs shared for sending only (restores). No switch support required. | No improvement to backup speed. |
| balance-alb | Fault tolerance. Balances load between NICs for both sending and receiving. No switch support required. | Can only balance load for the local network segment. |
| balance-xor | Fault tolerance. Transmit load spread across NICs. | Balanced for transmit only, so it can only help speed up multiple simultaneous restores. Requires switch support. No improvement to backup speed. |
| balance-rr | Fault tolerance. Each packet is sent from the next NIC (round-robin). If the switch is capable, it can send packets to the appliance in the same round-robin way. If the switch can spread packets across the interfaces, throughput from one client can be faster than with one NIC alone. | Packets might arrive out of sequence, which causes some packets to be retransmitted. Overall throughput of the bond connection is lower than the sum of the connections. Requires switch support. |
| 802.3ad (LACP) | Fault tolerance. Packets can be sent and received through different NICs for different clients. Overall throughput of the bond connection can be nearly the sum of all connections. | The maximum throughput of one client equals the throughput of one NIC. Requires switch support. |

Turning on bonding

Bonding is configured in the appliance Management Console, so when setting up a new appliance, first configure networking with only one interface. To turn on NIC bonding, follow these steps:

Sign in to the appliance Management Console and go to System › Network Adapters.

In the NIC Bonding group, select the NICs to include in the set.

Select Enabled.

In the Bonding Modes drop-down list, select the mode.

A reminder is shown for modes that require special support from the switch.

Configure the IP address, or select DHCP, and then click Apply.

Go to Reboot and click Shutdown.

If the selected bonding mode requires switch support, turn on bonding (port trunking) in the switch configuration for the ports the appliance is connected to, and then turn on the appliance.
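Whether the switch step applies depends only on the selected mode. As a hypothetical sketch (not the Management Console's actual code), the mode table in this article can be encoded as a lookup that produces the reminder. The numeric values are the mode numbers used by the Linux kernel bonding driver, included only for cross-reference with that driver's documentation; how the appliance maps its modes internally is an assumption.

```python
from typing import Dict, NamedTuple, Optional


class BondMode(NamedTuple):
    linux_mode: int              # mode number in the Linux bonding driver
    needs_switch_support: bool   # taken from the mode table in this article


# Modes offered by the appliance, per this article.
BOND_MODES: Dict[str, BondMode] = {
    "balance-rr": BondMode(0, True),
    "active-backup": BondMode(1, False),
    "balance-xor": BondMode(2, True),
    "802.3ad": BondMode(4, True),
    "balance-tlb": BondMode(5, False),
    "balance-alb": BondMode(6, False),
}


def switch_reminder(mode: str) -> Optional[str]:
    """Return a reminder for modes that need switch support, else None."""
    if BOND_MODES[mode].needs_switch_support:
        return (f"{mode} requires bonding (port trunking) to be turned on "
                "for the appliance's ports in the switch configuration.")
    return None


if __name__ == "__main__":
    for name in BOND_MODES:
        print(name, "->", switch_reminder(name) or "no switch support needed")
```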

Modes not requiring support from the switch

Active-backup – Fault tolerance. If the active interface loses connection, a backup interface is brought online. This mode only provides fault tolerance. Only one interface is ever active.

Balance-tlb – Fault tolerance and load balancing (for transmit only). In this mode, outgoing data is transmitted through all of the interfaces in the bond set, but only one interface receives data.

Balance-alb – Fault tolerance and load balancing. This mode is balance-tlb with the addition of modified ARP replies, so that different clients talk to different interfaces. Each client machine can only talk to one interface, but traffic from multiple clients can be spread across multiple interfaces.
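The ARP trick in balance-alb can be pictured as a table from client to NIC that is filled in as clients are answered. The Linux bonding documentation describes the receive load as being distributed sequentially (round robin) among the slaves; the sketch below models only that idea and leaves out link-speed grouping, failover, and ARP table refreshes. The interface and client names are hypothetical.

```python
from itertools import cycle
from typing import Dict, List


def assign_clients_alb(clients: List[str], nics: List[str]) -> Dict[str, str]:
    """Simplified model of balance-alb receive balancing.

    The first time a client is answered, its ARP reply carries the MAC of
    the next NIC in round-robin order; after that the client keeps sending
    to that one NIC.
    """
    next_nic = cycle(nics)
    table: Dict[str, str] = {}
    for client in clients:
        if client not in table:  # one NIC per client, assigned once
            table[client] = next(next_nic)
    return table


if __name__ == "__main__":
    print(assign_clients_alb(["server1", "server2", "server3"], ["eth0", "eth1"]))
    # {'server1': 'eth0', 'server2': 'eth1', 'server3': 'eth0'}
```

Each client stays on a single NIC, which is why one client gains no speed, while several clients together can use all NICs.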

Modes that require a switch that supports bonding

balance-xor – Fault tolerance and some load balancing. The source and destination MAC addresses are XORed to choose which interface to send traffic through. All traffic going through one gateway shares one interface on the appliance.

802.3ad (LACP) – Fault tolerance and load balancing. Client traffic through a gateway shares one interface on the appliance. All connections must use the same speed and duplex. One client can only use the bandwidth of one NIC, but multiple simultaneous clients can be spread across the NICs. Two clients might share one NIC even if others are available (see How the network layout affects bonding performance for details).

balance-rr – Fault tolerance and load balancing across multiple interfaces. If the switch supports the balance-rr mode, traffic into and out of the appliance is balanced across the connections. This is the only mode in which traffic from one client can use more than one NIC. Because consecutive packets travel over different links, they can arrive out of order, which can cause higher CPU use on the computers at both ends, as well as packet retransmissions. This causes TCP congestion control to limit the speed to less than the sum of the connections in use. With four 1 Gbit interfaces bonded on the appliance, a single client on a fast enough connection (also using port trunking, or a single faster connection such as 10 Gbit) might achieve only 2 Gbit/s.
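Why round-robin sending reorders packets can be shown with a small simulation. The sketch assumes packets of one flow leave at a fixed interval, each on the next NIC in turn, and that one link is slightly slower than the others; the latency values are hypothetical and chosen only to make the effect visible.

```python
from typing import List


def nic_for_packet(seq: int, n_nics: int) -> int:
    """balance-rr: packet number seq goes out on NIC seq mod n."""
    return seq % n_nics


def arrival_order(packet_count: int, latency_us: List[int], gap_us: int = 1) -> List[int]:
    """Order in which one flow's packets arrive when sent round robin.

    Packet seq is sent at seq * gap_us on NIC nic_for_packet(seq, n) and
    arrives latency_us[nic] later. Ties are broken by sequence number.
    """
    n = len(latency_us)
    arrivals = [
        (seq * gap_us + latency_us[nic_for_packet(seq, n)], seq)
        for seq in range(packet_count)
    ]
    return [seq for _, seq in sorted(arrivals)]


def late_packets(order: List[int]) -> int:
    """Count packets that arrive after a higher-numbered packet."""
    highest, late = -1, 0
    for seq in order:
        if seq < highest:
            late += 1
        else:
            highest = seq
    return late


if __name__ == "__main__":
    # Four bonded links; the second is hypothetically 3 microseconds slower.
    order = arrival_order(8, [0, 3, 0, 0])
    print(order)                # [0, 2, 3, 1, 4, 6, 7, 5]
    print(late_packets(order))  # 2
```

The receiving TCP stack sees the late packets as gaps, which is what triggers retransmissions and congestion control and keeps a single flow below the sum of the link speeds.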

Choosing between balance-rr and 802.3ad

If your switch supports both the balance-rr and 802.3ad (also known as LACP) modes, we recommend that you use one of these modes, as they provide the greatest benefit to throughput. Generally, we recommend 802.3ad, but the trade-offs between these two modes are discussed below.

802.3ad allows the maximum throughput of the bond to be nearly the sum of the speeds of the component connections. One client can only use the bandwidth of one NIC at a time, so with any number of 1 Gbit/s links in the bond, one client will only be able to use 1 Gbit/s. To use the bandwidth of more than one NIC, more than one client must be backed up simultaneously. Fully saturating the bond usually requires at least as many clients as there are NICs in the bond. The MAC addresses of the client machines determine which NIC they use, so some clients may share a NIC even if other NICs are free.
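The effect of MAC-based NIC selection can be estimated with a simple model. Assuming (as a simplification; real switches use vendor-specific hashes) that each client lands on any of the n NICs with equal probability and independently of the others, a given NIC stays idle with probability (1 - 1/n)^k for k clients, so the expected number of busy NICs is n(1 - (1 - 1/n)^k):

```python
from fractions import Fraction


def expected_busy_nics(n_nics: int, n_clients: int) -> Fraction:
    """Expected number of NICs carrying at least one client.

    Assumes each client is hashed to a NIC uniformly at random and
    independently of the others. A given NIC is idle with probability
    (1 - 1/n) ** k, so the expected busy count is n * (1 - (1 - 1/n) ** k).
    """
    idle = (1 - Fraction(1, n_nics)) ** n_clients
    return n_nics * (1 - idle)


if __name__ == "__main__":
    for clients in (1, 2, 4, 8):
        print(clients, float(expected_busy_nics(4, clients)))
```

With four NICs and four clients the result is 175/64, about 2.7 busy NICs on average, which illustrates why filling the bond usually takes at least as many clients as there are NICs, and often more.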

balance-rr allows a single client to use more bandwidth than one of the component NICs provides. The maximum possible throughput of the bond is lower than with 802.3ad, but the maximum throughput that a single client can use is higher than the maximum that a single client can use in 802.3ad.

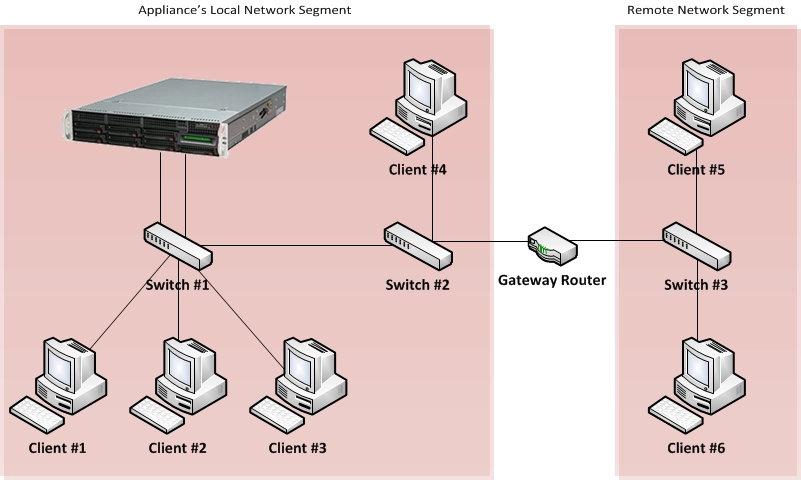

How the network layout affects bonding performance

With the exception of balance-rr, the modes that do load balancing (such as balance-xor and 802.3ad) select the interface to send packets to based on the sender's MAC address. As a result, network traffic going into the appliance from the clients is not evenly balanced across the interfaces: all traffic from one source has the same sending MAC address, so it all goes to one interface on the appliance.

The throughput possible between two machines is only as fast as a single link in the set. For example, on an appliance configured with four 1 Gbit NICs and a client computer connected over a 10 Gbit connection, communication between them will not be faster than a single 1 Gbit link. This is because all packets coming from that client have the same source MAC address and are therefore always sent to the same interface on the appliance.

Selecting the destination interface based on the sender's MAC address lets the switch determine very quickly where a packet should go, but it introduces some limitations. For example, in the example network diagram, traffic from Server 5 and Server 6 passes through a router to reach the appliance's local network segment. All packets from those servers therefore carry the same source MAC address (that of the router), so they share one link to the appliance. Servers 1-4 are on the same network segment as the appliance, so each has a unique source MAC address.
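The router effect can be reproduced with a simplified MAC-based hash: XOR the source and destination MAC addresses and take the result modulo the number of NICs, as described for balance-xor. Switches and the Linux bonding driver use their own hash variants, so this is only an illustration; all MAC addresses below are hypothetical and loosely follow the six-server example.

```python
from typing import Dict, Optional, Tuple


def mac_to_int(mac: str) -> int:
    """Convert 'aa:bb:cc:dd:ee:ff' to a 48-bit integer."""
    return int(mac.replace(":", ""), 16)


def pick_interface(src_mac: str, dst_mac: str, n_nics: int) -> int:
    """Simplified MAC-based hash: (source XOR destination) modulo NIC count."""
    return (mac_to_int(src_mac) ^ mac_to_int(dst_mac)) % n_nics


def source_mac_seen(client_mac: str, gateway_mac: Optional[str]) -> str:
    """Source MAC on frames arriving on the appliance's segment.

    Routed frames carry the gateway's MAC, not the client's own MAC.
    """
    return gateway_mac if gateway_mac is not None else client_mac


APPLIANCE_MAC = "02:00:00:00:00:01"  # hypothetical bond MAC
ROUTER_MAC = "02:00:00:00:00:fe"     # hypothetical router MAC
SERVERS: Dict[str, Tuple[str, Optional[str]]] = {
    "server1": ("02:00:00:00:00:10", None),
    "server2": ("02:00:00:00:00:11", None),
    "server3": ("02:00:00:00:00:12", None),
    "server4": ("02:00:00:00:00:13", None),
    "server5": ("02:00:00:00:00:20", ROUTER_MAC),  # behind the router
    "server6": ("02:00:00:00:00:21", ROUTER_MAC),  # behind the router
}

if __name__ == "__main__":
    for name, (mac, gateway) in SERVERS.items():
        src = source_mac_seen(mac, gateway)
        print(name, src, "-> NIC", pick_interface(src, APPLIANCE_MAC, 4))
```

With these addresses, servers 1 to 4 happen to map to four different NICs (1, 0, 3, 2), while servers 5 and 6 both map to NIC 3 and share it with server 3, because the appliance side only ever sees the router's MAC for them.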

The balance-rr mode uses a different scheme to decide which interface to send data to, so data from a single source can be spread across multiple interfaces on the appliance. This allows traffic from Server 5 and Server 6 to use more than one interface on the appliance, but has the disadvantage that packets are often delivered out of order in both directions. This causes TCP congestion control to limit the speed to less than the theoretical maximum. With four 1 Gbit interfaces bonded on the appliance, a single client on a fast enough connection (also using port trunking, or a single faster connection such as 10 Gbit) might achieve only 2 Gbit/s.

For further information, see Wikipedia and the Linux Foundation.